Video Translation QA: Step-by-Step Review Process

Your team just localized a product demo and three paid ad creatives. The translations look accurate. The voices sound clear. The timelines meet your launch schedule.

Then the feedback comes in. A regional manager says one phrase feels unnatural. A marketing lead notices the voice runs ahead of the on-screen animation. Performance metrics show strong impressions but lower-than-expected watch time in one market.

This is where Video Translation quality assurance becomes essential.

Video Translation QA is not just about checking grammar. It is a structured review process that ensures Dubbing, timing, tone, and delivery align with your marketing goals. In this guide, you will find a step-by-step QA workflow, the performance metrics teams track, and practical ways to refine AI Dubbing before publishing multilingual campaigns.

This guide is for marketing teams, growth managers, and localization leads managing repeatable multilingual content.

Why Video Translation QA Matters for Dubbing and Automatic Dubbing

When teams rely on AI Dubbing or Automatic Dubbing, speed increases. But speed without review introduces risk.

Common issues found during QA:

Timing drift between voice and visuals

Inconsistent terminology across ad sets

Slight tone mismatch in sales messaging

Speaker confusion in multi-speaker videos

These issues are rarely dramatic; they are subtle. In performance marketing, though, small friction points can reduce retention and conversion.

A strong QA process ensures that your Video Translator workflow remains structured and predictable across campaigns.

Step 1: Validate Transcript Quality with a Video Transcriber Check

Every QA process starts with the transcript.

Before reviewing translated output, confirm:

Speaker separation is correct

Sentences are segmented logically

Timestamps do not overlap

Technical terminology is consistent

If the transcript has segmentation, timing, or speaker errors, Video Translation quality will suffer downstream.

In practice, teams often review the transcript inside a Video Transcriber environment designed for clean timing and speaker structure, making small corrections before moving to the translation stage.
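The transcript checks listed above can be partly automated before a human pass. The following is a minimal sketch, assuming the transcript has been exported as a list of segments with a speaker label and start and end times in seconds. The `Segment` fields and the `find_transcript_issues` helper are illustrative names, not the export schema of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One transcript line (hypothetical schema for illustration)."""
    speaker: str
    start: float  # seconds
    end: float    # seconds
    text: str


def find_transcript_issues(segments: list[Segment]) -> list[str]:
    """Report missing speakers, non-positive durations, and overlapping timestamps."""
    issues = []
    latest_end = float("-inf")
    for seg in sorted(segments, key=lambda s: s.start):
        if seg.end <= seg.start:
            issues.append(f"{seg.start:.2f}s: end is not after start")
        if not seg.speaker.strip():
            issues.append(f"{seg.start:.2f}s: missing speaker label")
        if seg.start < latest_end:
            issues.append(
                f"{seg.start:.2f}s: overlaps a segment ending at {latest_end:.2f}s"
            )
        # Track the furthest end seen so far so nested overlaps are caught too
        latest_end = max(latest_end, seg.end)
    return issues


sample = [
    Segment("Host", 0.0, 2.5, "Welcome to the demo."),
    Segment("Guest", 2.4, 4.0, "Thanks for having me."),
    Segment("", 4.0, 5.0, "Let's get started."),
]
for issue in find_transcript_issues(sample):
    print(issue)
```

A check like this does not judge whether sentences are segmented logically or whether terminology is consistent; those still need a reviewer. It only narrows the list of timestamps a reviewer has to look at.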

Step 2: Review Script Clarity Using Subtitle & Script Editor Controls

Once translation is generated, open the script view before listening to the dub.

Focus on:

Sentence length and readability

Product names and acronyms

Cultural tone adjustments

Phrasing that feels too literal

A Subtitle & Script Editor allows teams to refine the Video to Text Script without restarting the entire workflow.

This step is critical before running Automatic Dubbing, especially for performance-driven content.
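Two of the script checks above, product names and sentence length, lend themselves to a quick automated scan. The sketch below assumes the script is available as source and translated line pairs and that the team maintains a glossary of required renderings. The function names, the product name "Smart Sync", and the 1.4 length ratio are all hypothetical choices for illustration, not recommended values.

```python
def find_glossary_violations(
    pairs: list[tuple[str, str]], glossary: dict[str, str]
) -> list[tuple[int, str, str]]:
    """Return (line index, source term, required rendering) for each miss."""
    violations = []
    for index, (source, target) in enumerate(pairs):
        for term, required in glossary.items():
            term_in_source = term.lower() in source.lower()
            rendering_in_target = required.lower() in target.lower()
            if term_in_source and not rendering_in_target:
                violations.append((index, term, required))
    return violations


def find_long_lines(pairs: list[tuple[str, str]], max_ratio: float = 1.4) -> list[int]:
    """Return indexes of lines whose translation is much longer than the source.

    A long translation often forces faster speech in the dub, so it is worth
    a second look. The ratio is an assumed threshold and varies by language pair.
    """
    flagged = []
    for index, (source, target) in enumerate(pairs):
        if source and len(target) / len(source) > max_ratio:
            flagged.append(index)
    return flagged


pairs = [
    ("Open the Smart Sync dashboard", "Abra el panel de Smart Sync"),
    ("Smart Sync saves time", "La sincronización inteligente ahorra tiempo"),
]
glossary = {"Smart Sync": "Smart Sync"}  # product name must stay untranslated

print(find_glossary_violations(pairs, glossary))
print(find_long_lines(pairs))
```

Simple substring matching will miss inflected forms in many languages, so results like these are a prompt for the reviewer rather than a verdict. Cultural tone and overly literal phrasing remain human judgments.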

Step 3: Perform Synchronized Playback Review for Dubbing

After script refinement, review the Dubbing output with visuals.

During playback, check:

Does the voice align with on-screen animations?

Are feature explanations synchronized with UI highlights?

Do speaker transitions feel natural?

Multi-speaker content requires additional attention. If Voice Cloning is used, confirm each speaker maintains consistent tone and clarity.
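One way to make the playback review repeatable is to log a few cue points per video and compare them numerically. The sketch below assumes a reviewer notes, for each cue, when the visual event happens and when the dubbed voice reaches the matching phrase. The `Cue` structure, the `find_sync_drift` helper, and the 0.3 second tolerance are assumptions for illustration; an acceptable tolerance depends on the content.

```python
from dataclasses import dataclass


@dataclass
class Cue:
    """A reviewer-logged sync point (hypothetical structure)."""
    label: str
    visual_time: float  # seconds, when the animation or UI highlight appears
    voice_time: float   # seconds, when the dub reaches the matching phrase


def find_sync_drift(cues: list[Cue], tolerance: float = 0.3) -> list[tuple[str, float, str]]:
    """Return cues where the voice is ahead of or behind the visual by more than tolerance."""
    flagged = []
    for cue in cues:
        offset = cue.voice_time - cue.visual_time
        if abs(offset) > tolerance:
            direction = "ahead of" if offset < 0 else "behind"
            flagged.append((cue.label, round(offset, 2), direction))
    return flagged


cues = [
    Cue("logo reveal", 1.0, 1.1),
    Cue("export button highlight", 12.0, 11.2),
    Cue("call to action", 28.0, 28.6),
]
for label, offset, direction in find_sync_drift(cues):
    print(f"{label}: voice is {abs(offset):.1f}s {direction} the visual")
```

A negative offset corresponds to the case described earlier in this guide, where the voice runs ahead of the on-screen animation. Whether speaker transitions feel natural is still something only listening can confirm.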

For broader workflow alignment, teams often manage this process within a centralized system, such as a multilingual video translation and dubbing platform, that connects transcription, editing, and output review.

Step 4: Conduct Regional Review for Video Translation Accuracy

After technical review, regional stakeholders should verify:

Local terminology accuracy

Cultural tone alignment

Call-to-action clarity

Market-specific messaging adjustments

Even high-quality AI Dubbing benefits from a short native review cycle before launch.

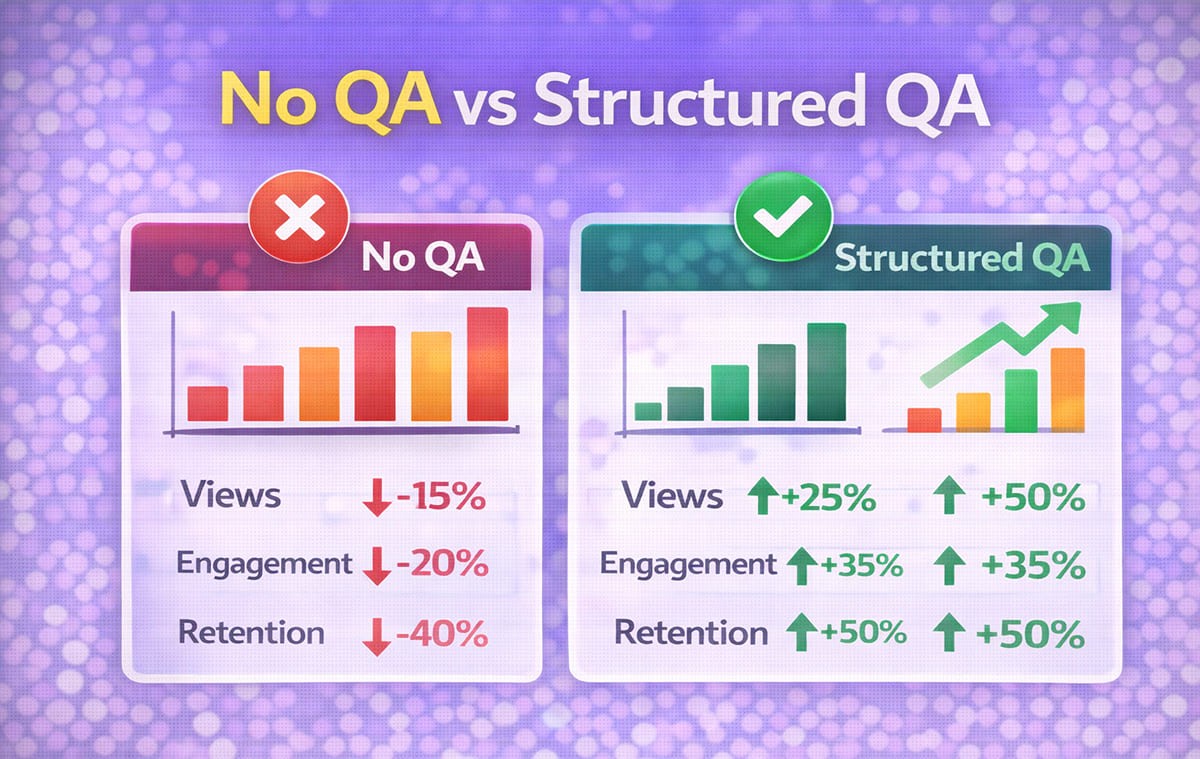

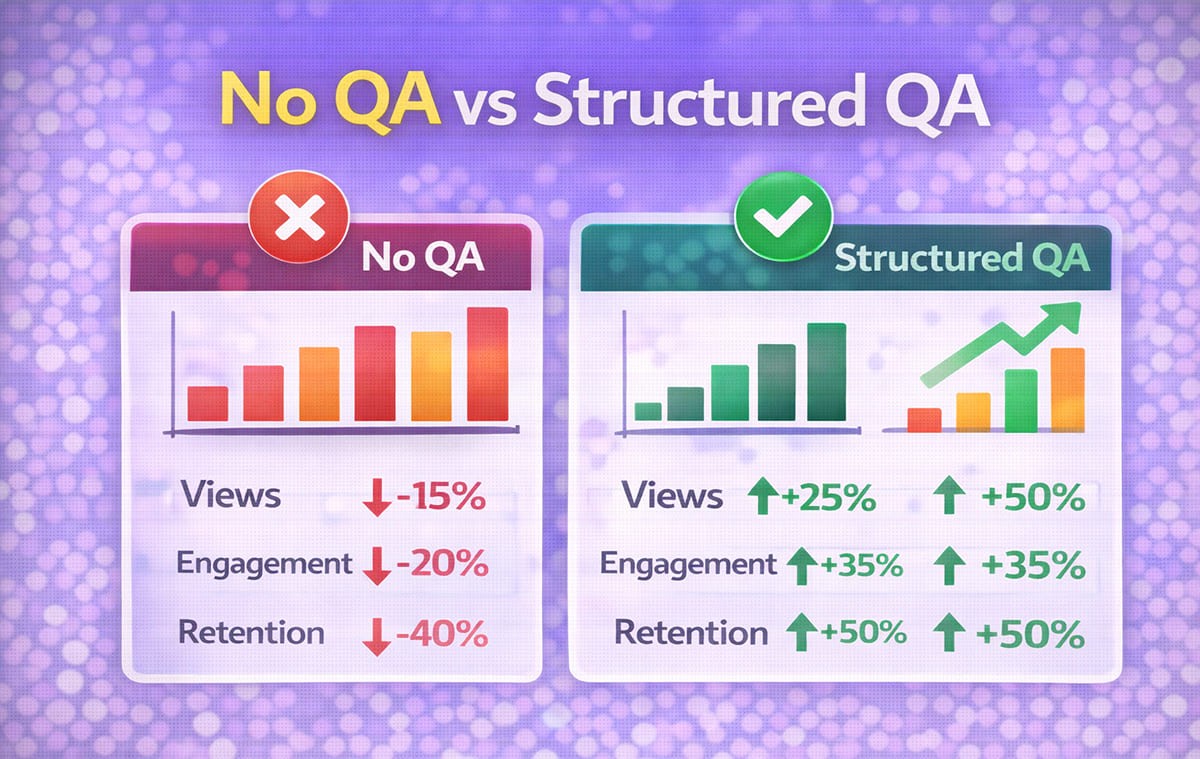

Step 5: Measure Performance Lift and ROI Impact

Video Translation QA is not complete without performance evaluation.

Teams measure:

Watch time across regions

Completion rate differences

Click-through rate changes

CPA variations by language

Regional conversion test results

If one market shows lower retention, revisit:

Script segmentation

Tone adjustments

Voice pacing

Subtitle timing

Video Translation improvements are often incremental. Small script refinements can produce measurable watch time improvements in A/B testing.
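The regional comparison above can be sketched in a few lines once the raw counts are exported from an ad or analytics platform. The example below assumes one record per region with impressions, views, completions, clicks, conversions, spend, and total watch seconds. The `RegionStats` fields, the helper names, the sample numbers, and the 0.85 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RegionStats:
    """Raw counts for one market (hypothetical export format)."""
    region: str
    impressions: int
    views: int
    completions: int
    clicks: int
    conversions: int
    spend: float
    watch_seconds: float


def _ratio(numerator: float, denominator: float) -> Optional[float]:
    """Divide safely; None means the metric is undefined for this region."""
    return numerator / denominator if denominator else None


def summarize(s: RegionStats) -> dict:
    """Derive the per-region metrics named in this step."""
    return {
        "avg_watch_seconds": _ratio(s.watch_seconds, s.views),
        "completion_rate": _ratio(s.completions, s.views),
        "ctr": _ratio(s.clicks, s.impressions),
        "cpa": _ratio(s.spend, s.conversions),
    }


def flag_low_retention(
    stats: list[RegionStats], baseline_region: str, min_ratio: float = 0.85
) -> list[str]:
    """Return regions whose watch time or completion rate falls below min_ratio of the baseline."""
    summaries = {s.region: summarize(s) for s in stats}
    base = summaries[baseline_region]
    flagged = []
    for region, summary in summaries.items():
        if region == baseline_region:
            continue
        for metric in ("avg_watch_seconds", "completion_rate"):
            value, reference = summary[metric], base[metric]
            if value is not None and reference is not None and value < min_ratio * reference:
                flagged.append(region)
                break
    return flagged


stats = [
    RegionStats("en", 20000, 1000, 400, 300, 25, 500.0, 30000.0),
    RegionStats("de", 18000, 1000, 380, 260, 20, 480.0, 29000.0),
    RegionStats("ja", 15000, 800, 200, 180, 9, 450.0, 16000.0),
]
print(flag_low_retention(stats, baseline_region="en"))
```

A flagged region is a reason to revisit the script, pacing, and subtitle timing for that market, not proof that localization caused the gap. Audience and placement differences can produce the same pattern, which is why A/B testing a revised script is the more reliable comparison.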

Marketing-Specific Workflows Supported by AI Dubbing Systems

Marketing teams need more than basic translation. They need repeatability. Teams evaluating different AI dubbing tools often compare transcription accuracy, editing flexibility, and synchronization features before standardizing a production workflow.

Strong workflows support:

Export control for multiple ad formats

Script refinement across campaign variations

Rapid iteration across ad sets

Consistent terminology across product launches

When managing multilingual ads, teams often structure campaigns around repeatable Dubbing and editing loops. A marketing-focused use case such as AI dubbing workflows for multilingual video ads reflects how timing precision supports ad performance.

Video Translation QA Checklist Table for Teams

| QA Stage | What to Review | Why It Matters |
| --- | --- | --- |
| Transcript validation | Speaker structure, segmentation | Prevents drift in Dubbing |
| Script refinement | Tone, phrasing, terminology | Improves clarity and sales messaging |
| Playback synchronization | Visual alignment with voice | Protects demo credibility |
| Regional validation | Cultural nuance and CTA clarity | Increases conversion relevance |
| Performance tracking | Watch time, CPA, completion rate | Connects QA to measurable ROI |

This structured approach ensures Video Translation quality supports business outcomes rather than just technical accuracy.

Integrating QA Into Repeatable AI Dubbing Workflows

Instead of treating QA as a final step, high-performing teams embed it inside production cycles.

A typical repeatable loop:

Generate transcript

Refine script

Run Dubbing output

Conduct playback review

Export

Monitor metrics

Iterate if needed

When structured properly, QA does not slow production. It reduces rework and stabilizes Automatic Dubbing performance across campaigns.
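Embedding QA inside the production cycle can be pictured as a series of gates that run in order and stop at the first one that reports a problem, so rework happens before the more expensive later stages. The sketch below is one possible shape for that idea. The `run_qa_gates` function, the gate names, and the asset fields are hypothetical and do not describe any specific product's workflow.

```python
from typing import Callable

# A gate takes an asset record and returns a list of issues (empty means pass)
Gate = Callable[[dict], list[str]]


def run_qa_gates(asset: dict, gates: list[tuple[str, Gate]]) -> dict:
    """Run gates in order and stop at the first one that reports issues."""
    for name, check in gates:
        issues = check(asset)
        if issues:
            return {"passed": False, "stopped_at": name, "issues": issues}
    return {"passed": True, "stopped_at": None, "issues": []}


def require(flag: str, message: str) -> Gate:
    """Build a simple gate that passes only when a reviewer has set the flag."""
    return lambda asset: [] if asset.get(flag) else [message]


gates = [
    ("transcript validation", require("transcript_ok", "transcript not signed off")),
    ("script refinement", require("script_ok", "script not signed off")),
    ("playback review", require("playback_ok", "playback not reviewed")),
]

asset = {"name": "demo_es", "transcript_ok": True, "script_ok": False, "playback_ok": True}
print(run_qa_gates(asset, gates))
```

In a real pipeline each gate would wrap an actual check or a reviewer sign-off rather than a boolean flag. The ordering is the point: a script problem is caught before anyone spends time on playback review or export.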

For teams refining brand tone consistency, perspectives shared in how AI dubbing maintains consistent voice across markets offer additional context for aligning messaging globally.

Common QA Mistakes Teams Make

Even well-organized teams can overlook small issues when deadlines are tight and multiple languages are involved. Most QA breakdowns are not caused by major errors, but by skipping one validation step that affects Dubbing clarity or Video Translation consistency.

Skipping transcript validation: Errors at the transcript stage compound later.

Reviewing only subtitles without listening: Visual review alone misses tone and pacing issues.

Ignoring performance signals: If watch time drops in one region, the localized version for that market likely needs another QA pass.

Treating every video equally: Ad creatives may require tighter QA than internal training content.

Frequently Asked Questions

How long should a Video Translation QA cycle take?

For most marketing videos, structured QA can be completed within one business day if workflows are defined clearly.

Does Automatic Dubbing reduce QA effort?

It reduces manual production time but does not eliminate the need for structured review.

Should every region have a native reviewer?

High-impact campaigns benefit from at least one native review pass before launch.

Is Voice Cloning part of QA?

Voice Cloning is reviewed during playback validation to ensure tone consistency and speaker clarity.

Conclusion

Video Translation QA ensures that multilingual Dubbing delivers both technical accuracy and marketing effectiveness. By validating transcripts, refining scripts, reviewing synchronized playback, and measuring performance lift, teams can build repeatable workflows that support measurable global growth.

ESTsoft Inc. 15770 Laguna Canyon Rd #250, Irvine, CA 92618
